I'm Feeling Lucky

It's Been A Minute

I hope everyone has been doing well. To start, I apologize for the length of time between this post and my last one. I do not expect to go nearly as long between this post and the next!

It's been a busy year in markets, and it's been far more productive to be making investments vs. writing about my investing. Additionally, I ran into some issues with the platform I was planning to use to circulate these posts. It took me a little while to muster the activation energy needed to set up another forum to post on. However, now everything is up and running.

Recently, I've been having some good conversations with people about Google. After a quick portfolio update, I wanted to share some of those thoughts below. Let me know if it sparks thoughts in any of you! Always open to pushback – that's the goal of trying to learn in public.

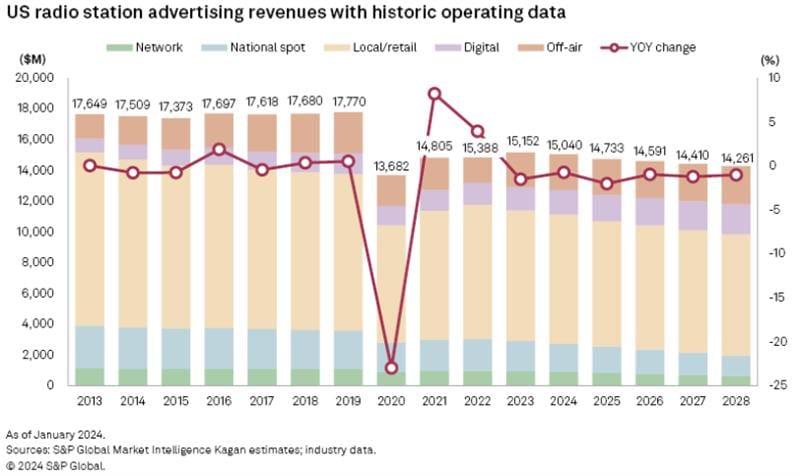

Snowball Update

From a returns perspective, this has been an acceptable year. So far, my personal portfolio ("Snowball") has outperformed the S&P 500 by approximately 13 percentage points (through the end of July), while I've held 10-20% cash throughout the period. A large portion of this outperformance is due to luck. Seven months is simply too short a period over which to draw meaningful conclusions with respect to investment performance. However, to quote the Yankees pitcher Lefty Gomez, "I'd rather be lucky than good."

Alphabet (GOOG/GOOGL)

One of the most controversial positions in my portfolio is Alphabet, otherwise known as Google (GOOGL). I've talked about it with a number of you recently, and figured it might make sense to fully flesh out some of my recent thoughts around the stock and business in order to prompt debate.

Google has been a consensus short since OpenAI released ChatGPT in the fall of 2022. The short thesis is straightforward.

Google built the most profitable business in the history of the world by cataloguing the world's information, constantly indexing it, quickly retrieving it, and serving ads with incredibly high intent (i.e., if I search for "car insurance," there is a very high likelihood I am looking to buy car insurance). This business benefited from scale economics (we know from the DOJ's Search Antitrust case that Microsoft has spent over $100B on Bing/Bing's index) and strong feedback loop effects (more queries -> more user logs -> more clicks data -> more relevant organic searches -> more monetizable ads -> more profits -> more investment in the index & search technology -> better search results -> more queries).

ChatGPT and other large language models (LLMs) threaten these advantages. Instead of serving you "10 blue links," where you have to search for the answer, these models have effectively been trained on everything written in the history of the world and are able to almost instantly provide you with an answer. In many ways, it's the fulfillment of the "I'm feeling lucky" button: instantaneous information retrieval. By circumventing the "10 blue links," this format bypasses Google's primary monetization engine, as there is no opportunity to show an ad. Google is left with a classic innovator's dilemma: should Google disrupt its own search business (again, the most profitable business in the history of the planet Earth) in order to better compete in this new modality, and to what degree? And even if it logically "should," will a bureaucratic organization like Google have the intestinal fortitude and agility necessary?

Oh, and did I mention that any day now a Federal judge will decide whether or not Google can even continue to pay its largest distribution partner (Apple) a revenue share in the future? Or if it needs to divest Chrome & Android? Or if Google needs to share all of its search-related data and algorithms with its competitors?

One can easily see how it would be hard to own Google.

However, I want to lay out a few of the primary arguments below as to why I think Google is an attractive investment (and was a particularly attractive risk/reward when I materially increased my position when the stock traded near ~$155 a few months ago).

- Technology Layering

At this point it is obvious that AI-related search is growing rapidly and is going to be a massive category. We still aren't anywhere near the "endpoint" or mature modality for AI search. I imagine the endpoint is likely some combination of voice search (you have an AI you're talking to) + wearable tech that enables vision search. For now though, AI chatbots are growing rapidly. The key question is, "Does this disrupt Google search?"

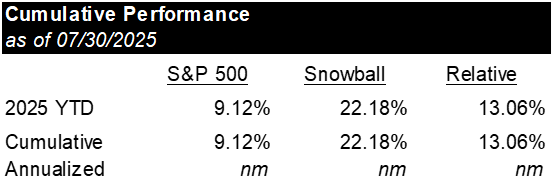

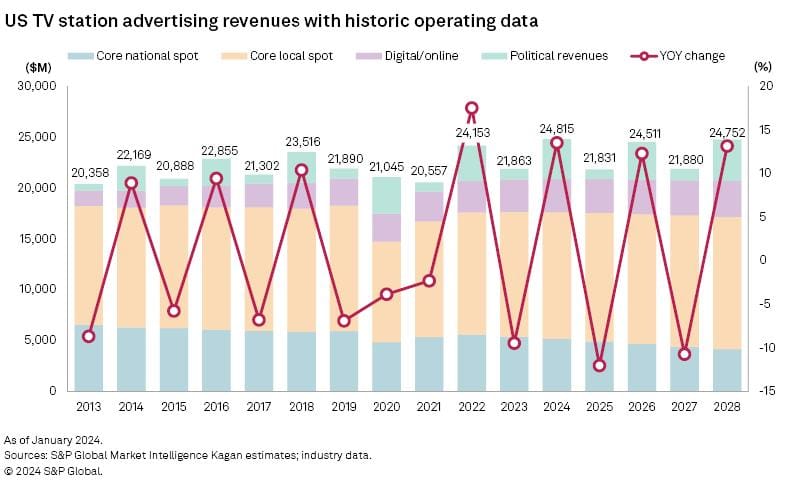

I have a mental model for technology shifts: often, the new technology doesn't displace the old technology, but rather layers in on top of it and takes all of the incremental share. This isn't always the case (we are making a whole lot fewer horse buggies now than we were in 1905); however, I've included a few noteworthy instances of this happening below.

U.S. TV Station Advertising Revenues (historical and estimated)

U.S. Radio Station Advertising (historical and estimated)

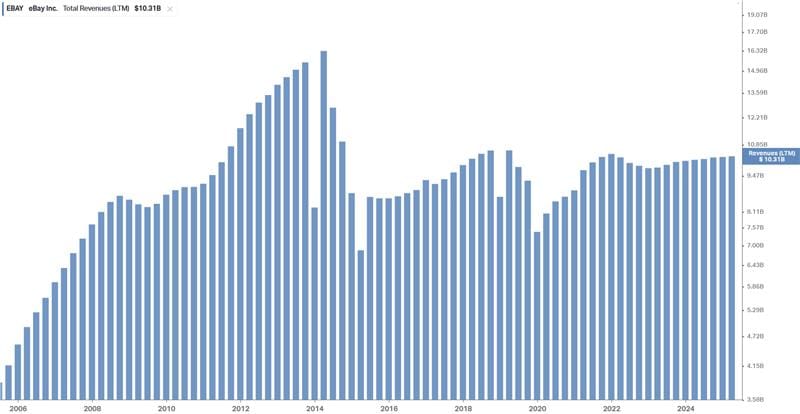

eBay Total Revenues (note that PayPal was spun out in 2015)

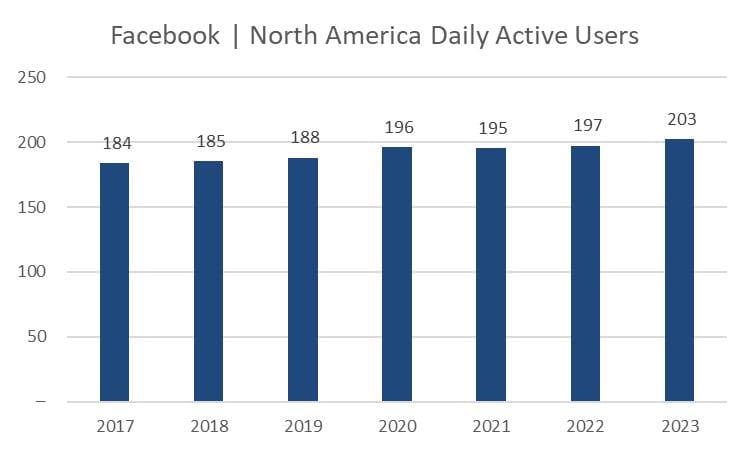

Facebook Daily Active Users (post Cambridge Analytica, "Delete Facebook" movement, and the rise of Instagram and TikTok)

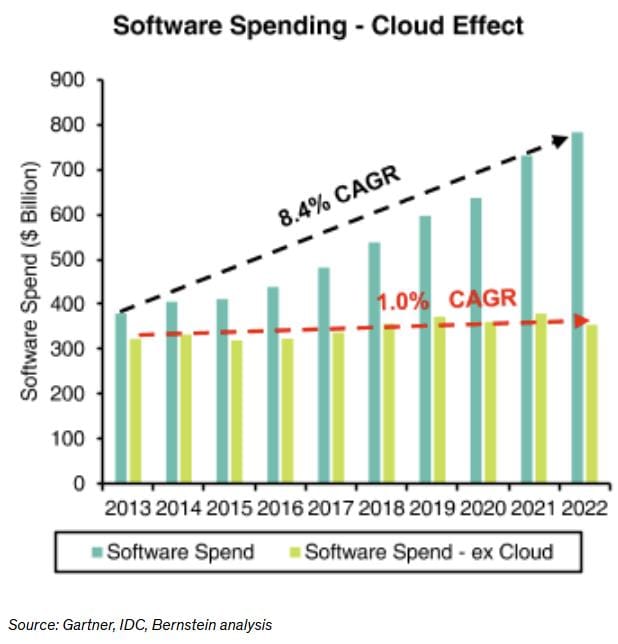

On-Prem Software Spend

In fact, Google's own history has been one of new media layering in on top of Search and taking share (rapid growth of Amazon and Meta advertising). Google did $206B of Search and YouTube ads revenue in 2024 vs. Meta and Amazon at a combined ~$216B of revenue. Over the past 14 years, Amazon and Meta have together built an advertising business larger than Google's, but Google grew Search revenue healthily throughout the period.

Furthermore, I've asked a number of people to download their search history over the prior 5 years in order to analyze it. The upshot is that I haven't yet seen anyone (sample of ~20) with any discernible slowdown in their search history, and most, if not all, of these individuals view themselves as LLM early adopters. While I don't want to over-extrapolate from a small sample, these results are supportive of the "layering" hypothesis.

Additionally, Google's recent press release and comments on the earnings call indicate that total queries, total queries from Apple devices, and total commercial queries are all still growing. Earlier this year, I paid a court reporter for access to transcripts from Google's Remedy trial vs. the DOJ. The following is an excerpt from Liz Reid, a Google executive, when asked about the recent trends in the U.S. search market (May of 2025).

Q. Okay. Do you know today, how – do you have an estimate or understanding as to how many queries have grown at Google Search since the introduction of AI Overviews?

A. It depends on the country and the --

Q. Just in the U.S.

A. Yes. Generally this is confidential, but I assume we are going to share here?

Q. I'm sorry. This is confidential information?

A. Yes.

MR. SMURZYNSKI: Your Honor --

THE WITNESS: How much our traffic has grown.

MR. SMURZYNSKI: – I understand Ms. Reid's concern, but we determined it is okay.

THE WITNESS: Okay. In the U.S. it's in the 1 1/2 to 2 percent space.

The evidence we have to date (while admittedly early) is supportive of the thesis that while Google's share is decreasing, the TAM is expanding, and the absolute volume of Google searches is flat to up three years after the release of ChatGPT. LLMs/AIs are capturing the vast majority, if not all, of the incremental growth. This is logical. Increased "search utility" (via LLMs) should result in more total search volume. If computers "get smarter" and become better at answering our questions, we will simply spend more time "talking" to computers.

- Google's AI Advantages

Google has many advantages that are often underappreciated with respect to the business's competitive position.

First, Google has incredible product distribution, with 15 separate products having surpassed 1 billion users. Search, Maps, Gmail, Drive, Android, YouTube, and Google Play are some of the most frequently used digital services in the world. Microsoft's primary competitive advantage over the past 20 years has been its enterprise distribution. It's why Internet Explorer beat Netscape, Microsoft Office beat WordPerfect/Lotus 1-2-3, Teams beat Slack, Teams is beating Zoom, and Azure is growing so rapidly.

While consumers are often less sticky than enterprise customers (due to the collective action problem of getting everyone at an enterprise to switch to a new digital service/software at once), there are still significant advantages afforded to Google due to its broad product distribution. We are already beginning to see this bear fruit. Google has implemented both AI Overviews and AI Mode in search, with overwhelmingly positive consumer reviews to date. In fact, these changes have been so impactful that they're beginning to change search behavior, driving increased query length. This is unquestionably positive. The reason the number of 3-to-4 keyword queries is increasing is that people now feel comfortable that AI will allow their more complex queries to be answered.

This is a prime example of the pie growing. These queries previously didn't exist. They were questions someone had pop into their head, they thought about searching for it, and ultimately decided "eh, that's too hard of a question for Google." We now have tangible evidence that AI Overviews is reducing that friction. And due to Google's product distribution, they've been able to layer this functionality into the traditional search engine result page (SERP), rather than needing to go acquire new customers.

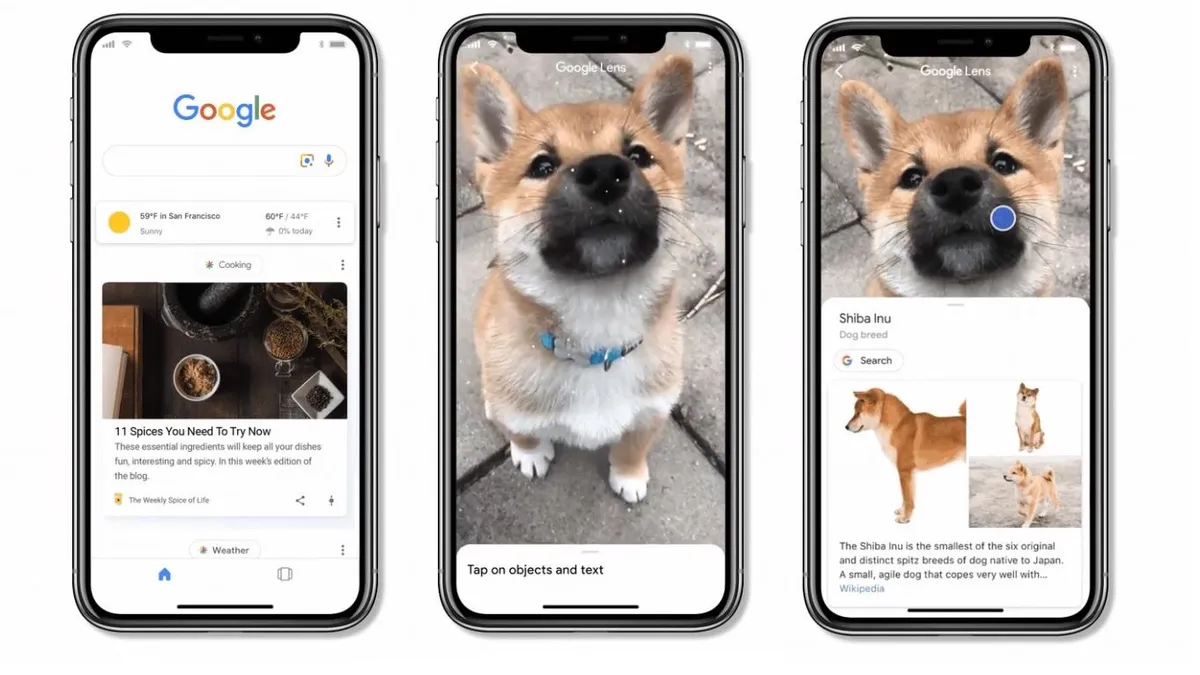

Google's success with Google Lens provides another example of how search is evolving. Google mentioned that Lens was responsible for 20B monthly queries in October of 2024, and in April of 2025 management said that Lens monthly queries had grown 5B since October. And now this past month (July 2025), they said that Lens was growing 70% Y/Y. Going from 20B Lens queries in October to 25B Lens queries in April implies ~56% annualized growth (on rounded numbers). This quarter's commentary implies that Lens growth is accelerating (70% growth – although this may just be a byproduct of them giving us rounded numbers).
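The annualization above is just compounding a six-month change over a full year. A minimal sketch, using only the rounded figures quoted in this post (the function name is illustrative):

```python
def annualized_growth(start: float, end: float, months: float) -> float:
    """Compound a growth rate observed over `months` into a 12-month rate."""
    return (end / start) ** (12.0 / months) - 1.0


# Rounded Lens disclosures quoted in the post (monthly queries).
lens_oct_2024 = 20e9
lens_apr_2025 = 25e9

# October to April is six months, so the 25% change compounds twice in a year.
lens_annualized = annualized_growth(lens_oct_2024, lens_apr_2025, months=6)
print(f"Implied annualized Lens growth: {lens_annualized:.2%}")
```

Because both inputs are rounded to the nearest 5B, the true rate could sit meaningfully above or below this figure, which is why the 70% Y/Y comment may or may not reflect a real acceleration.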

This is happening on a base of roughly 425B total monthly queries (Google announced in March that they had surpassed 5 trillion annual queries). So as of July we now have ~7% of the query base (I'm estimating 30B monthly Lens queries as of July) growing 55–70%. Accounting for the fact that Lens likely skews slightly more towards commercial queries vs. traditional search, based on comments on the Q3 call (1 in 4 Lens queries is commercial) and what we know from the DOJ trial (20% of all queries show an ad), we likely have 8–9% of commercial queries growing ~60%. That's a ~500bps contribution to growth. On the last few calls, management has also disclosed that the "majority" of Lens queries are incremental. Assuming a range of 50% to 100%, we can estimate a net tailwind of 250–500bps to commercial queries. This example shows how a small, rapidly growing product like Google Lens can provide a meaningful tailwind to total commercial queries for Google.
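For anyone who wants to check or flex the Lens math, here is a rough sketch of the chain of estimates. Every input is a rounded figure from this post, and treating "queries that show an ad" as the commercial query base is a simplifying assumption, so the outputs are only as good as those inputs.

```python
# Rounded inputs taken from the post; all are estimates, not reported figures.
total_monthly_queries = 425e9   # rough base implied by 5T+ annual queries
lens_monthly_queries = 30e9     # estimated July Lens volume
lens_commercial_share = 0.25    # 1 in 4 Lens queries is commercial
overall_ad_share = 0.20         # 20% of all queries show an ad (commercial proxy)
lens_growth = 0.60              # roughly the middle of the 55-70% range

# Lens as a share of all queries, then as a share of commercial queries.
lens_share_of_queries = lens_monthly_queries / total_monthly_queries
commercial_lens = lens_monthly_queries * lens_commercial_share
commercial_total = total_monthly_queries * overall_ad_share
lens_share_of_commercial = commercial_lens / commercial_total

# Gross contribution to commercial query growth, in basis points.
gross_bps = lens_share_of_commercial * lens_growth * 10_000

print(f"Lens share of all queries:        {lens_share_of_queries:.1%}")
print(f"Lens share of commercial queries: {lens_share_of_commercial:.1%}")
print(f"Gross contribution: {gross_bps:.0f} bps")

# Haircut for the share of Lens queries that are truly incremental.
for incremental_share in (0.5, 1.0):
    net_bps = gross_bps * incremental_share
    print(f"Net contribution at {incremental_share:.0%} incremental: {net_bps:.0f} bps")
```

On these inputs the gross figure lands a little above 500bps, so the 250–500bps range in the text is the same math rounded down slightly.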

Second, there has been an increasingly popular refrain in the AI world that "models are a commodity." The core of this premise, fueled by the impressive results out of the Chinese AI lab DeepSeek in December of 2024, is that any leading-edge model can quickly be replicated by a host of copycats. Effectively, once the frontier has been pushed out (often at a significant cost), others can quickly use the output of the frontier model to train similarly capable models at a much lower cost. Thus, there is no defensible IP behind these models.

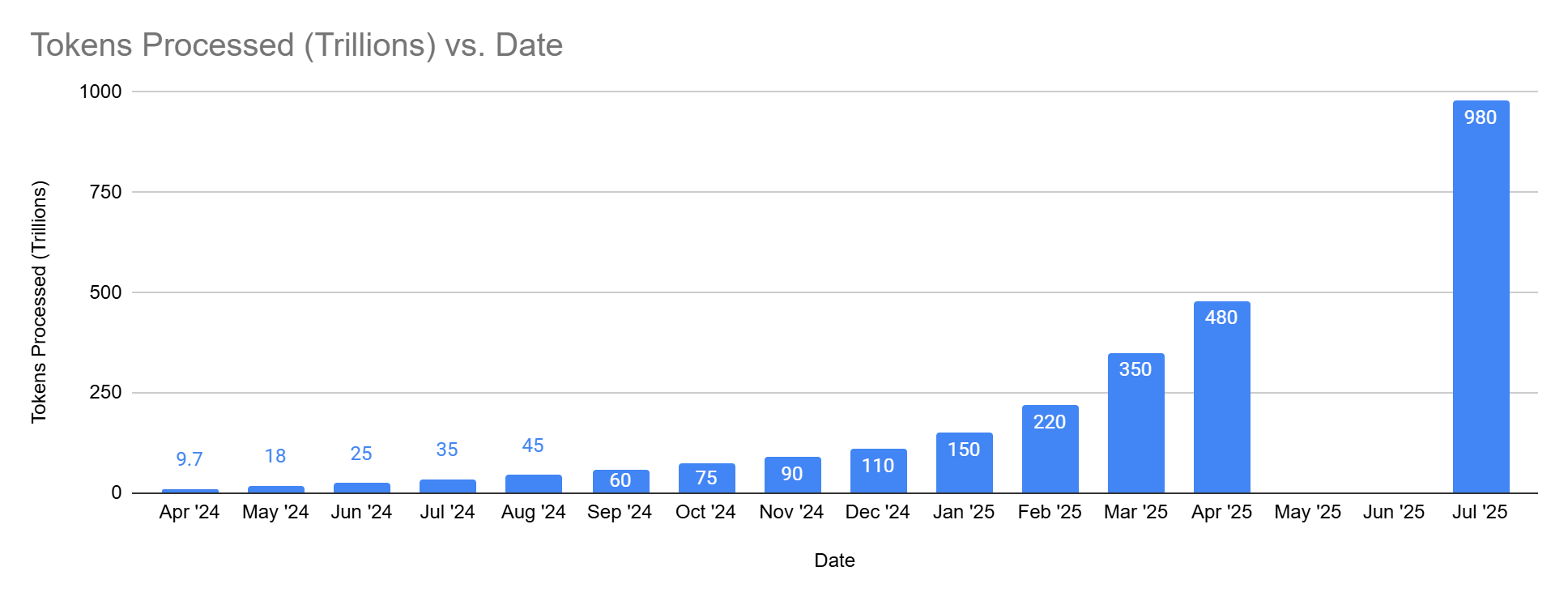

I think this premise is interesting to analyze in the context of the following image. I've used data Google released during Google I/O in May, combined with recent disclosures on the Q2 earnings call, to track Google's token processing over time.

You can see the growth has not been linear. Growth accelerated in ~February, which is when Gemini 2.0 was introduced.

[Note: I wrote most of the below (with respect to the commoditization of models) before the introduction of GPT-5, but I believe all of the below logic still holds.]

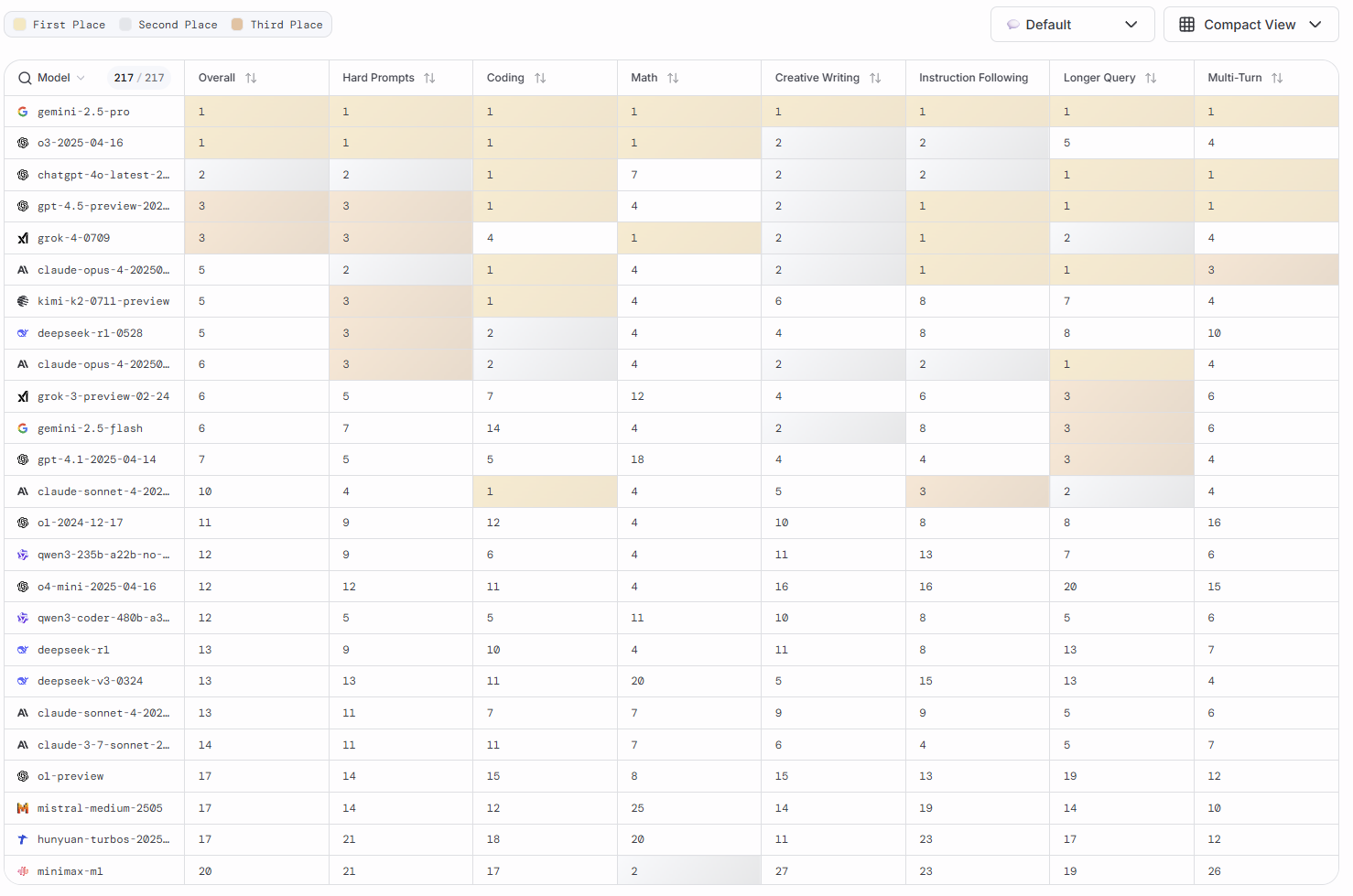

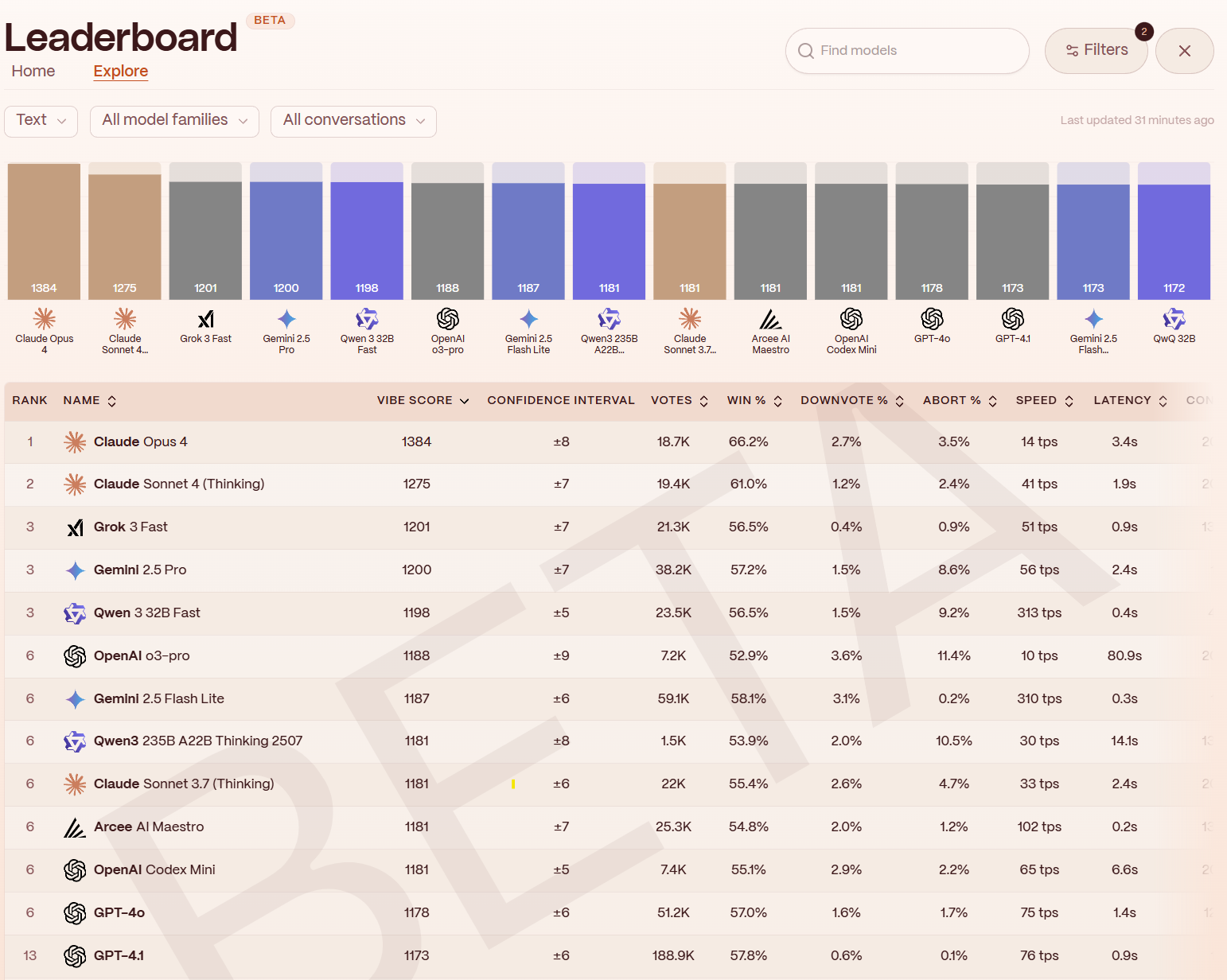

The past few months have made me increasingly less convinced that "models are a commodity." At this point, the top of the model leaderboards is dominated by Google and OpenAI. Anthropic recently released Claude 4, specifically focused on coding, and it's currently tied with Gemini 2.5 on LMArena for web development (and is lagging in everything else). While impressive, it's not clear Claude 4 materially pushed the frontier (although Anthropic is finding product-market fit with coding agents). DeepSeek released a new model that is worse than o3/Gemini 2.5. Llama 4 missed the mark, and Zuckerberg is scrambling to write hundred-million-dollar checks to catch up. Grok 4 is an impressive launch, and arguably pushed the frontier on many leading-edge model evals, but doesn't appear to be holding up as well in the real world. At this point, for the most part, consumers can comfortably use ChatGPT or Gemini and operate under the implicit assumption that these two will either be at (or virtually tied at) the top of the leaderboard (see the LMArena leaderboard image and the Yupp text leaderboard below).

To reiterate, the argument for "models are a commodity" is that once a model is released, it is very easy to use that model to "train" a similar model at a fraction of the cost, effectively allowing people to catch up to the frontier for way less $. However, what if we are nowhere near the "final frontier"? If scaling laws continue to hold, then it likely won't be enough to play fast follower. Users will demand the most powerful models (and businesses will likely demand the most efficient model – taking into account per-token-costs). Even if ~10 years into the future we reach the "final AI frontier," and everyone else can simply distill the best model cheaply... users will have likely spent the intervening 10 years cementing user behavior and providing their personal data to the providers with the best models along the way.

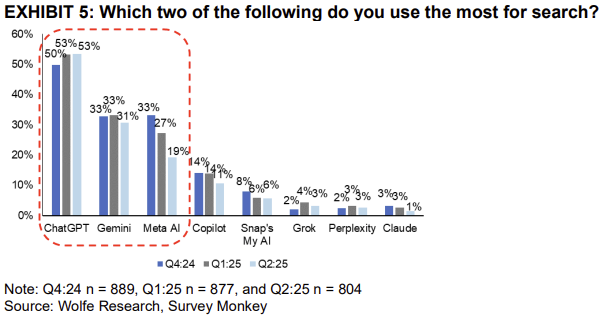

I think we are seeing this shape up in user behavior. For the most part, Gemini and ChatGPT are far enough ahead of the competitors (Llama, Claude, DeepSeek, Grok, etc.) that users are gravitating to one of those two as their "daily driver." I've seen at least ~5 different surveys that look like the below, with ChatGPT a clear #1 and Gemini solidifying itself as the second most popular LLM. While Claude and open-source models may be having more success in enterprises, consumers are primarily gravitating towards ChatGPT or some combination of Gemini/AI Overviews.

We are seeing this behavior on an accelerated timeline with LLM coding assistants (Cursor, Windsurf, etc.). Eighteen months ago, AI founders sold a clean unit-economics story:

"Model costs will fall ~10× a year as models commoditize, so we can charge $20/month for 'unlimited,' eat the losses now, and ride the curve to 90% margins."

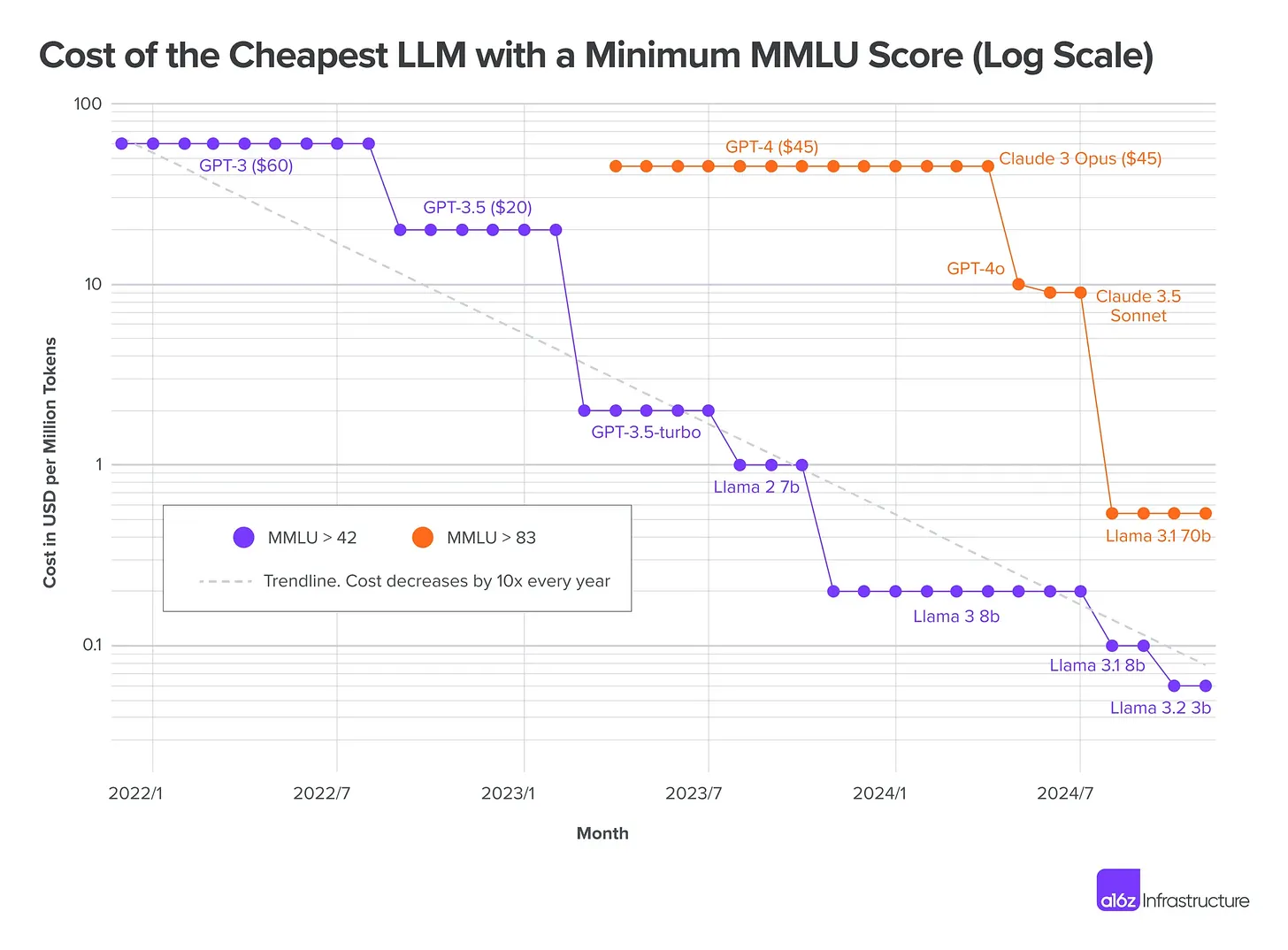

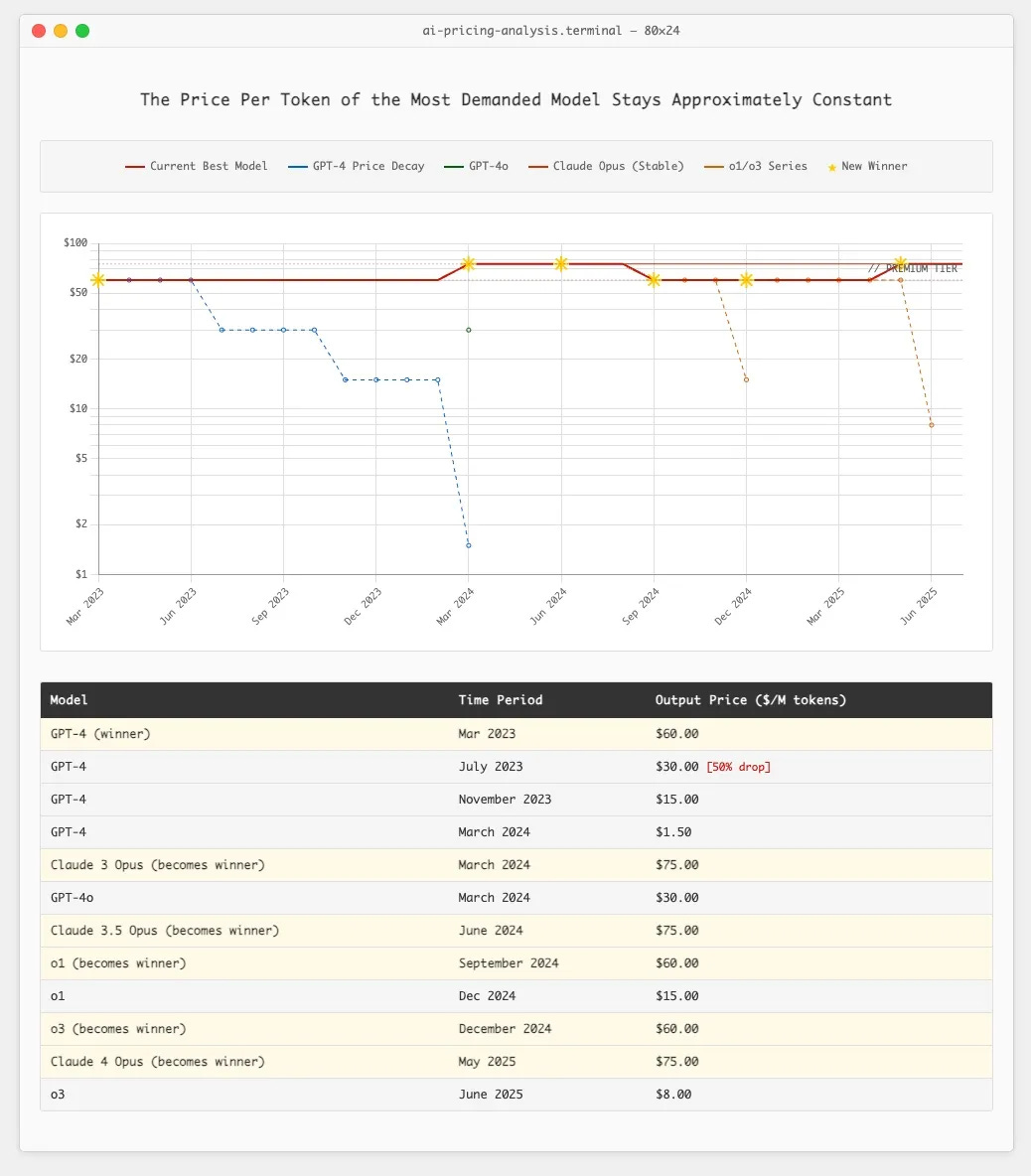

It seemed relatively foolproof. Cursor, Windsurf, etc. raised on this playbook and heavily subsidized power users, with the expectation that per token costs would just keep decreasing (see chart).

This hasn't happened though. Yes, older models get cheaper—but users dump them. GPT-4 immediately replaced 3.5 for most Cursor users, despite being ~26× pricier; Claude 3 Opus later grabbed share from the weaker GPT-4, even after GPT-4 price cuts. The models people actually want keep landing around $60–$75 per million tokens, regardless of how cheap the last generation becomes. This doesn't look like a commodity; this looks like a near insatiable desire for more intelligence (a.k.a. ability to do work).
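To make the broken playbook concrete, here is a toy sketch of the two paths. The $20/month price and ~10× annual cost decline come from the pitch above; the $200/month starting cost to serve a power user is a purely hypothetical number chosen for illustration, not a figure from any company.

```python
monthly_price = 20.0          # the "unlimited" plan price from the pitch
initial_serving_cost = 200.0  # HYPOTHETICAL monthly cost to serve a power user
annual_cost_decline = 10.0    # "~10x a year" from the pitch


def gross_margin(price: float, cost: float) -> float:
    """Gross margin as a fraction of price (negative means a loss)."""
    return (price - cost) / price


pitch_margins = []
frontier_margins = []
for year in range(3):
    # The pitch: users stay on the same model while its cost collapses.
    pitch_cost = initial_serving_cost / annual_cost_decline ** year
    # What happened: users move to the newest model, priced like the last one.
    frontier_cost = initial_serving_cost
    pitch_margins.append(gross_margin(monthly_price, pitch_cost))
    frontier_margins.append(gross_margin(monthly_price, frontier_cost))
    print(f"Year {year}: pitch {pitch_margins[-1]:+.0%}, frontier {frontier_margins[-1]:+.0%}")
```

Under the pitch, two years of 10× declines are enough to reach the promised 90% margin. If users keep trading up to a frontier model at a roughly constant price, the margin never leaves where it started.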

This phenomenon reminds me of something Jeff Bezos said in Amazon's 2017 Shareholder Letter:

One thing I love about customers is that they are divinely discontent. Their expectations are never static – they go up. It’s human nature. We didn’t ascend from our hunter-gatherer days by being satisfied. People have a voracious appetite for a better way, and yesterday’s ‘wow’ quickly becomes today’s ‘ordinary’.

If you buy the premise that models aren't a commodity, and that pushing the frontier matters because there are positive knock-on effects with respect to user growth from having the best models (because the desire for intelligence is insatiable), then Google is incredibly well positioned vs. other players in the space – including OpenAI.

- Google Search throws off tremendous amounts of cash to fund the necessary investment in training/inference, whereas OpenAI has to raise outside capital to fund its investments

- Google likely still has the best collection of AI researchers in the world (Demis & DeepMind Team, John Jumper, Iason Gabriel, Noam Shazeer, etc.). Remember – the "T" in ChatGPT stands for "Transformer," an architecture developed at Google in 2016-17

- Google has the distribution (6 products with 2B users; 15 with 1B users)

- And potentially most importantly, due to Google's vertically integrated infrastructure (TPUs, most efficient data centers in the world, custom networking stack, proprietary software stack/optimizations), Google has 2–5x lower costs to train and run inference over a model vs. OpenAI. The more this becomes an arms race, the more likely Google is to win.

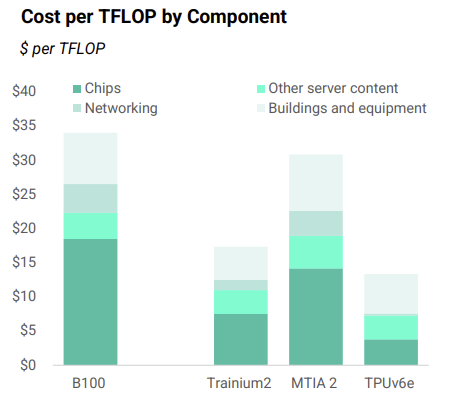

To elaborate on that last point (as it is often underappreciated), Google has been working on its custom AI accelerator chip, the TPU (tensor processing unit), for over 10 years now. Google is able to run its internal training and inference workloads on TPUs vs. using comparable Nvidia GPUs. This allows Google to avoid paying the "Nvidia tax" that every other company must pay. New Street Research estimates that the 6th generation TPU costs Google ~$13/TFLOP (a measure of compute power) vs. an equivalent Nvidia chip at ~$33/TFLOP.

As a byproduct of this, if OpenAI/Anthropic/xAI spend $30B on Nvidia GPUs, Google only needs to spend roughly $10B for equivalent compute. This is a massive competitive advantage, and is part of the reason why OpenAI is beginning to partner with Google to test using TPUs for itself. The more expensive these models become, the greater Google's advantage is.
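As a sanity check on the spend comparison, here is a small sketch that applies the New Street per-TFLOP estimates directly. It assumes cost per TFLOP is the only difference between the two paths (ignoring networking, power, software, and utilization), which is a simplification.

```python
# New Street Research estimates quoted in the post ($ per TFLOP).
tpu_cost_per_tflop = 13.0
gpu_cost_per_tflop = 33.0

# How many times cheaper a unit of TPU compute is on these estimates.
cost_advantage = gpu_cost_per_tflop / tpu_cost_per_tflop

# Spend Google would need to match a competitor's $30B GPU budget.
competitor_gpu_spend = 30e9
google_equivalent_spend = competitor_gpu_spend / cost_advantage

print(f"Cost advantage: {cost_advantage:.1f}x")
print(f"Equivalent Google spend: ${google_equivalent_spend / 1e9:.1f}B")
```

On these two numbers alone the advantage is closer to 2.5x and the equivalent spend closer to $12B; the ~$10B figure corresponds to a 3x advantage. Both sit inside the 2–5x range cited earlier.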

This recently announced partnership between OpenAI and Google's cloud business also raises the question: if Google, which has the best information of anyone regarding the competitive threat LLMs pose to its Search business, is willing to partner with OpenAI to give it increased capacity, does that not signal internal confidence from Google re: its prospects in Search?

- Sum Of The Parts (SOTP)

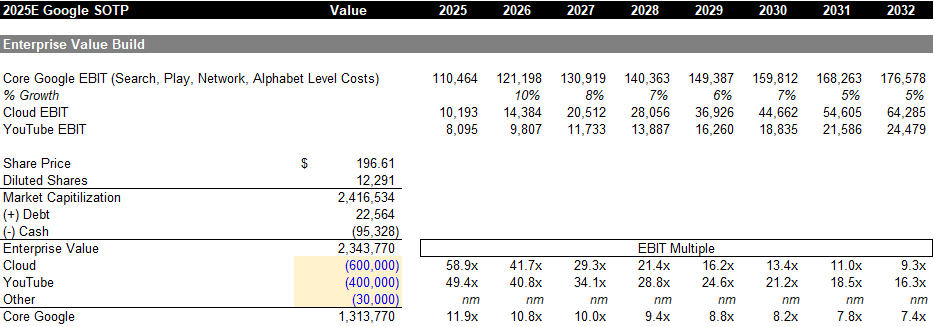

Below I am showing my SOTP math for Google. I break the business down into 4 parts: Google Cloud, YouTube, Other Bets, and Core Google.

- I value Google Cloud (GCP) at ~$600B, which is ~9x forward revenue. For reference, ORCL trades at ~12.0x revenue and MSFT trades at ~12.5x revenue. Both are more profitable and growing healthily (but not as fast as GCP). Out of all the hyperscale cloud businesses (AWS, Microsoft's Azure, Oracle Cloud, GCP, etc.), I am most optimistic about GCP. I'll save the ink, but a lot of my bullishness comes from some of the infrastructure advantages GCP possesses due to its lead in custom silicon (TPUs)

- I value YouTube at ~$400B. This works out to about 40x 2026 EBIT. This is a healthy multiple; however, YouTube is probably the most desirable asset in the media space with one of the deepest moats. For reference, NFLX trades at ~40x EBIT and the research firm MoffettNathanson recently put out a piece valuing YouTube at ~$550B

- I ascribe $50B of value to "other bets." I think this is a fair estimate considering Waymo raised at a $46B valuation in October of 2024 and has been gaining significant traction since then. This ascribes no value to Verily, etc.

- The remainder ("Core Google") is set to do ~$120B of NTM EBIT, burdened by $15B of model/LLM and other administrative costs. EBIT would be ~$135B without those costs

At an enterprise value of ~$2.35T (on a recent stock price of $197), I estimate that "Core Google" trades at ~11x forward EBIT (when I increased the position size at $155, the implied forward EBIT multiple was ~8.0x). This is for a business I forecast to grow EBIT at a 7.0% CAGR over the next 5 years.

For reference, some companies that trade between 7-9x forward EBIT include: Magna International (auto parts manufacturer), HP Inc., United Airlines, Global Payments, Western Digital, the major gold miners (Newmont, Kinross, etc.), Principal Financial Group, The Hartford Insurance Group, Omnicom, Toll Brothers, and Tenet Healthcare. eBay and PayPal, both structurally challenged businesses, trade for 12.5x and 10.5x EBIT, respectively. You may think Google's Search business is doomed; however, at a price of $155, the market was already pricing that in.

On a sum-of-the-parts basis, I think the stock is still too cheap. Cloud + YouTube alone cover more than 40% of the enterprise value (~45% including Other Bets), which provides a margin of safety and downside protection.
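The sum-of-the-parts arithmetic can be reproduced in a few lines. This is a sketch on the rounded values stated above (all in $B), not the underlying model, so small differences from the quoted multiples are rounding.

```python
# Rounded figures from the post, in $B.
enterprise_value = 2350.0
segment_values = {
    "Google Cloud": 600.0,
    "YouTube": 400.0,
    "Other Bets": 50.0,
}
core_ntm_ebit = 120.0  # "Core Google" forward EBIT, burdened by model/LLM costs

# Whatever the other segments don't account for is the implied Core Google value.
core_implied_value = enterprise_value - sum(segment_values.values())
core_multiple = core_implied_value / core_ntm_ebit

# Share of enterprise value covered by the non-core segments.
cloud_youtube_cover = (segment_values["Google Cloud"] + segment_values["YouTube"]) / enterprise_value
all_segments_cover = sum(segment_values.values()) / enterprise_value

print(f"Implied Core Google value: ${core_implied_value:,.0f}B")
print(f"Implied Core Google multiple: {core_multiple:.1f}x forward EBIT")
print(f"Cloud + YouTube share of EV: {cloud_youtube_cover:.1%}")
print(f"Cloud + YouTube + Other Bets share of EV: {all_segments_cover:.1%}")
```

On these rounded inputs, Cloud plus YouTube comes out nearer 43% of enterprise value, and about 45% once Other Bets is included. Swapping in a different enterprise value or segment estimate shows how sensitive the Core Google multiple is to each assumption.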

Ultimately though, my projected IRR is based on forecasted earnings growth, rather than a sum-of-the-parts methodology. I forecast a 5Y IRR of ~14% from a recent price of $197. This is driven by normalized EBIT growing at an ~11% CAGR from $116B in 2024 to $245B in 2031 combined with a ~3% FCF yield and a 20x normalized EPS exit multiple (in line with today's multiple – so no contribution or detraction from change in the multiple).
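A back-of-the-envelope version of that IRR build, as a sketch: it checks the EBIT CAGR from the two endpoints and then simply adds the FCF yield, which is a common approximation when the exit multiple is unchanged. The actual forecast presumably comes from a fuller model, so treat this as a consistency check only.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1.0 / years) - 1.0


# Normalized EBIT endpoints from the post, in $B.
ebit_2024 = 116.0
ebit_2031 = 245.0
ebit_cagr = cagr(ebit_2024, ebit_2031, years=2031 - 2024)

fcf_yield = 0.03            # ~3% FCF yield from the post
multiple_contribution = 0.0 # exit multiple in line with today's, so no change

# Additive approximation: growth + yield + change in multiple.
approx_irr = ebit_cagr + fcf_yield + multiple_contribution

print(f"EBIT CAGR 2024-2031: {ebit_cagr:.1%}")
print(f"Approximate IRR: {approx_irr:.1%}")
```

The endpoints imply a CAGR a touch above 11%, and adding the ~3% yield lands at roughly 14%, in line with the forecast above.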

The last thing I'll note on Google is that the range of outcomes remains quite wide. Even my 14% IRR is an "expected value" vs. a true point estimate. The IRR could be 20% or -5% over the next five years. I didn't even address the looming antitrust case in this short write-up, and the AI/LLM landscape is a rapidly evolving one. I've talked to a number of friends who've used Google as a funding short this past year (in order to get long Meta), under the assumption that Google is facing too many search- and regulatory-related headwinds to materially outperform over the near term. I understand that view. Google is a hard stock to own. And while the forward valuation of ~19x GAAP EPS almost certainly prices in some skepticism around terminal value, the stock is likely to continue to whipsaw around on headlines. It's a stock where you run the risk of looking very foolish if ChatGPT eventually begins to not only steal share, but also cause a decline in Google's total queries. I'll feel particularly dumb as someone who is a power ChatGPT user if that ends up being the case.

However, tension often presents opportunity. The fact that Google is so difficult to own may itself be responsible for a potential mispricing. You make a little money by being right, but you make a lot of money by being right when others disagree with you. I liked the risk/reward at $155 better than I do at ~$200. However, in a world of low expected returns, I personally feel comfortable with a position.

[Note: We're expecting a ruling in the DOJ vs. Google (Search) Remedy trial any day now. My expectation is that insofar as Judge Mehta goes "lenient" in one remedy aspect, he will be heavy-handed in others. For example, if he calls for a revenue share ban between Apple and Google, he won't require a Chrome divestiture. I would not be surprised if the stock is off on the day of the ruling. However, I think having the ruling out could act as a clearing event for the stock, as I know some investors who are unwilling to "get in front of" the remedy ruling. It's also worth noting that the ruling is virtually certain to be appealed.]

Disclaimer: Nothing here is investment, legal, tax, or financial advice. It’s opinion for educational purposes only—not a recommendation to buy or sell any security. I may hold positions in securities mentioned and may change them at any time without notice. All discussion regarding investments is in sole reference to my personal investment accounts. Any discussion of performance refers solely to my personal investment accounts, is unaudited and incomplete, and is provided for illustration only. It is not marketing, advertising, or a solicitation for any advisory service, fund, or security. Past performance is not indicative of future results. Do your own research.